Ensuring the quality and integrity of medical images is crucial for maintaining diagnostic accuracy in deep learning-based Computer-Aided Diagnosis and Computer-Aided Detection (CAD) systems. Covariate shifts — subtle variations in data distribution caused by different imaging devices or settings — can severely degrade model performance, similar to the effects of adversarial attacks. Therefore, it is vital to have a lightweight, fast method to assess the quality of these images before feeding them into CAD models. AdverX-Ray addresses this need by serving as an image quality assessment layer, designed to detect covariate shifts effectively.

This Adversarial Variational Autoencoder prioritizes the discriminator's role, using the generator's suboptimal outputs as negative samples to fine-tune the discriminator's ability to identify high-frequency artifacts. Images generated by adversarial networks often exhibit severe high-frequency artifacts, leading the discriminator to focus excessively on these components, which makes it well suited to this task. Trained on patches from X-ray images of specific machine models, AdverX-Ray can evaluate whether a scan matches the training distribution or whether a scan from the same machine was captured under different settings. Extensive comparisons with various out-of-distribution (OOD) detection methods show that AdverX-Ray significantly outperforms existing techniques, achieving a 96.2% average AUROC using only 64 random patches from an X-ray. Its lightweight and fast architecture makes it suitable for real-time applications, enhancing the reliability of medical imaging systems.

Medical X-ray images contain covariate factors that can compromise image quality and diagnostic accuracy. It is crucial to be able to detect changes in the imaging system (or faulty behavior) that present themselves as OOD covariate shifts in the images.

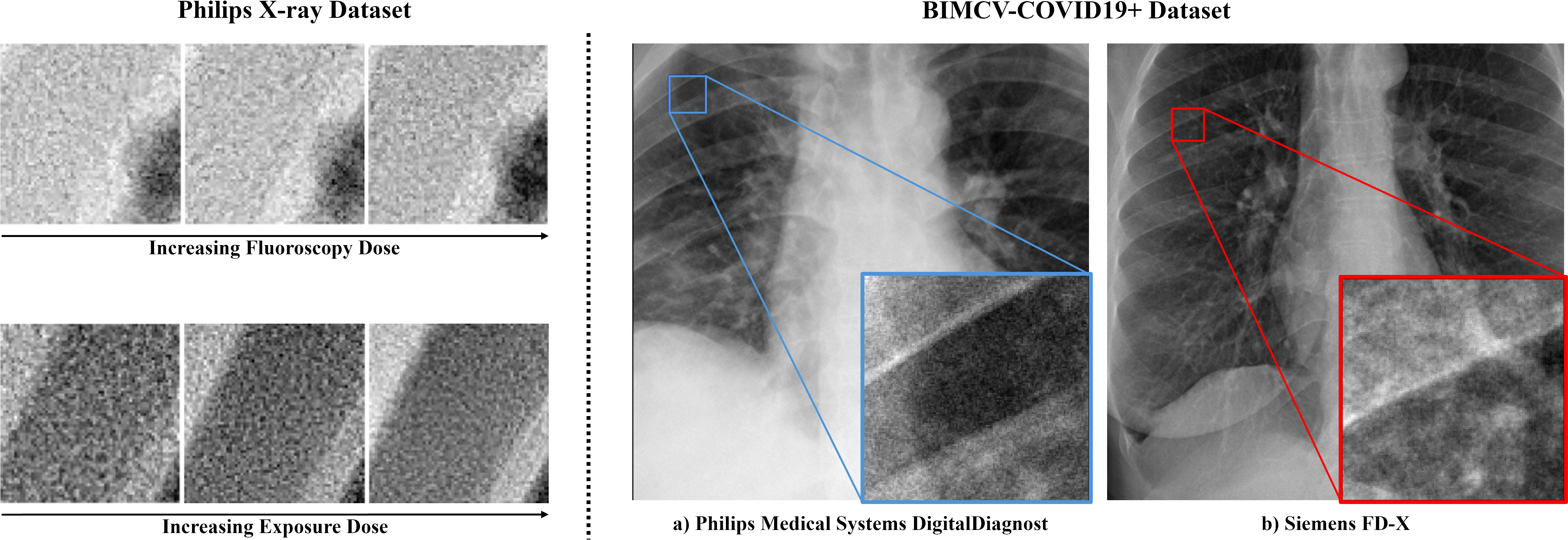

On the left, patches from the Philips X-ray dataset, showcasing different fluoroscopy and exposure doses. On the right, example chest X-ray images and patches from the BIMCV-COVID19+ dataset, each from a different machine.

To simulate real covariate shifts in X-ray imaging without access to OOD artifacts or faulty images, the acquisition settings of an X-ray scanner were altered to produce subtle variations in the images. A new dataset was created using an Azurion Image-Guided Therapy (IGT) system, capturing 12-bit DICOM X-ray images of a standard test object (a clock) across different dose levels and source-image distances (SID) in pulsed fluoroscopy and full radiation modes. Six primary imaging modes were defined, ranging from normal-dose exposures at 110 cm SID (Mode 0, considered in-distribution, ID) to low-dose fluoroscopy at 90 cm SID (Mode 5, the most OOD). The dataset, comprising 18 modes across two environments, is publicly available with the paper.

The BIMCV-COVID19+ dataset, sourced from the Medical Image Data Bank of the Valencian Community (BIMCV), includes CR, DX, and CT images of COVID-19 patients alongside their clinical findings and reports. It comprises 1,380 CX, 885 DX, and 163 CT studies from 1,311 COVID-19+ patients, totaling 3,141 X-ray and 2,239 CT images. This study focuses exclusively on the X-ray scans, grouped by 29 machine models to simulate real-world scenarios where models trained on one machine's data must process images from others, risking performance degradation. For experiments, five distinct ID sets were defined, each built from the images of one of the five machines with the highest scan counts.
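The grouping step described above can be illustrated with a minimal sketch. It assumes each scan carries a machine-model label; the helper names (`top_machine_id_sets`, `split_id_ood`) and the toy labels are hypothetical, and treating every other machine as OOD is an assumption about the evaluation protocol rather than something stated here.

```python
from collections import Counter


def top_machine_id_sets(scan_machines, k=5):
    """Return the k machine models with the most scans, most frequent first."""
    counts = Counter(scan_machines)
    return [model for model, _ in counts.most_common(k)]


def split_id_ood(scan_machines, id_model):
    """Return indices of scans from id_model (ID) and from all other models (OOD)."""
    id_idx = [i for i, m in enumerate(scan_machines) if m == id_model]
    ood_idx = [i for i, m in enumerate(scan_machines) if m != id_model]
    return id_idx, ood_idx


# Hypothetical toy metadata: one machine-model label per scan.
machines = ["A", "B", "A", "C", "A", "B", "D"]
id_models = top_machine_id_sets(machines, k=2)
id_idx, ood_idx = split_id_ood(machines, id_models[0])
```

With real metadata, `k=5` would yield the five ID sets, and one model would be trained per ID set.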

A common generative-based OOD detection approach uses trained models to identify unseen samples, while adversarially trained discriminators define boundaries for ID sets by estimating the probability of a sample being real (ID) or synthetic (OOD). Building on this, AdverX-Ray is introduced as an X-ray-specific Adversarial VAE framework that detects covariate shifts by processing batches of patches from X-ray scans. AdverX-Ray is a modified version of the Discriminative Covariate Shift Patch-based Network (DisCoPatch).

AdverX-Ray combines generative and reconstruction-based methods to train a discriminator for unsupervised OOD detection. The VAE minimizes the Evidence Lower Bound (ELBO) loss while generating samples and reconstructions designed to challenge the discriminator. Unlike in standard GANs, AdverX-Ray's discriminator is trained to distinguish real images from both generated and reconstructed images. This dual training addresses two key weaknesses: VAEs often produce reconstructions lacking the high-frequency details that are crucial for detecting shifts such as blurriness, while GANs overemphasize high-frequency differences and risk neglecting low-frequency content.
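As a rough illustration of the dual training signal, the sketch below computes a binary cross-entropy discriminator loss in which real patches are positives and both generated and reconstructed patches are negatives. The function names and the equal weighting of the two negative sources are assumptions made for illustration; the exact loss and weights used by AdverX-Ray are not given in this text.

```python
import math


def bce(prob, target, eps=1e-7):
    """Binary cross-entropy for a single predicted probability."""
    p = min(max(prob, eps), 1.0 - eps)  # clamp to avoid log(0)
    return -(target * math.log(p) + (1 - target) * math.log(1.0 - p))


def discriminator_loss(p_real, p_generated, p_reconstructed):
    """Mean BCE with real patches labeled 1 and both VAE outputs labeled 0."""
    real_term = sum(bce(p, 1) for p in p_real) / len(p_real)
    gen_term = sum(bce(p, 0) for p in p_generated) / len(p_generated)
    rec_term = sum(bce(p, 0) for p in p_reconstructed) / len(p_reconstructed)
    # Assumption: generated and reconstructed negatives are weighted equally.
    return real_term + 0.5 * (gen_term + rec_term)


# Discriminator outputs are interpreted as the probability of a patch being real (ID).
confident = discriminator_loss([0.9, 0.95], [0.1], [0.05])
undecided = discriminator_loss([0.5, 0.5], [0.5], [0.5])
```

A discriminator that separates the three sources well (`confident`) yields a lower loss than one that outputs 0.5 everywhere (`undecided`).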

AdverX-Ray aligns the discriminator's understanding with the ID frequency spectrum by balancing training on both types of images and refining their realism. Batch normalization further enhances the discriminator by leveraging batch statistics to differentiate distributions, modeling correlations across patches within scans and capturing global X-ray structures. Although this method processes scans individually, it remains efficient due to the discriminator's lightweight design, offering a robust mechanism for OOD detection.
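The patch-based scoring of a single scan can be sketched as follows. The patch size of 32, the toy image, and the averaging of per-patch outputs into one scan-level score are all assumptions for illustration; only the use of 64 random patches per image comes from the text.

```python
import random


def sample_patches(image, patch_size, n_patches=64, seed=None):
    """Crop n_patches random square patches from a 2D image given as a list of rows."""
    rng = random.Random(seed)
    height, width = len(image), len(image[0])
    if patch_size > height or patch_size > width:
        raise ValueError("patch_size exceeds image dimensions")
    patches = []
    for _ in range(n_patches):
        # randint is inclusive on both ends, so the patch always fits.
        top = rng.randint(0, height - patch_size)
        left = rng.randint(0, width - patch_size)
        patches.append([row[left:left + patch_size]
                        for row in image[top:top + patch_size]])
    return patches


def scan_score(patch_scores):
    """Average per-patch discriminator outputs into one score (assumed aggregation)."""
    return sum(patch_scores) / len(patch_scores)


# Hypothetical 128x128 image with values in the 12-bit range [0, 4095].
image = [[(r * 31 + c * 17) % 4096 for c in range(128)] for r in range(128)]
patches = sample_patches(image, patch_size=32, n_patches=64, seed=0)
```

In this setup, all 64 patches of one scan would form a single batch for the discriminator, so its batch-normalization statistics are computed over patches from that scan only.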

The results highlight the performance of OOD detection methods in the X-ray setting, where varying acquisition parameters can introduce heteroscedastic noise into the signal. Covariate shifts in this context are often intractable and generally not perceptible to non-specialists. However, with the exception of the GLOW model trained on typicality, all methods effectively detect shifts in high-level image statistics.

| Methods | Mode 1 | Mode 2 | Mode 3 | Mode 4 | Mode 5 | Average AUROC↑/FPR95↓ |
|---|---|---|---|---|---|---|
| DDPM (T250: LPIPS) | 86.4 | 88.5 | 84.2 | 89.3 | 96.5 | 89.0 / 69.4 |
| DDPM (T250: LPIPS + MSE) | 79.9 | 83.0 | 81.1 | 89.0 | 95.8 | 85.8 / 67.3 |
| VAE (ELBO) | 69.7 | 96.1 | 88.9 | 91.3 | 98.1 | 88.8 / 25.0 |
| AVAE (ELBO + Adv Loss) | 65.5 | 94.8 | 86.5 | 89.5 | 97.2 | 86.7 / 28.9 |
| GLOW (LL) | 89.3 | 98.8 | 99.8 | 99.9 | 100.0 | 97.6 / 8.7 |
| GLOW (Typicality) | 30.4 | 16.5 | 15.1 | 10.4 | 11.1 | 16.7 / 99.8 |
| AdverX-Ray (Proposed) | 99.5 | 99.9 | 99.9 | 100.0 | 100.0 | 100.0 / 0.0 |
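For readers unfamiliar with the two metrics in the table, the following sketch computes AUROC and FPR95 from per-scan scores. It assumes that higher scores mean "more in-distribution" and that ID is the positive class; the function names and toy scores are hypothetical, and values are returned as fractions, whereas the table reports percentages.

```python
def auroc(id_scores, ood_scores):
    """Probability that a random ID score exceeds a random OOD score (ties count half)."""
    wins = 0.0
    for s_id in id_scores:
        for s_ood in ood_scores:
            if s_id > s_ood:
                wins += 1.0
            elif s_id == s_ood:
                wins += 0.5
    return wins / (len(id_scores) * len(ood_scores))


def fpr_at_95_tpr(id_scores, ood_scores):
    """Fraction of OOD samples accepted at the threshold that keeps at least 95% of ID samples."""
    ranked = sorted(id_scores, reverse=True)
    # Integer ceiling of 0.95 * n avoids floating-point rounding issues.
    n_keep = max(1, (95 * len(ranked) + 99) // 100)
    threshold = ranked[n_keep - 1]
    accepted_ood = sum(1 for s in ood_scores if s >= threshold)
    return accepted_ood / len(ood_scores)


# Hypothetical scores: one OOD scan (0.65) overlaps with the ID range.
id_scores = [0.9, 0.8, 0.7, 0.6]
ood_scores = [0.1, 0.2, 0.65]
area = auroc(id_scores, ood_scores)
fpr95 = fpr_at_95_tpr(id_scores, ood_scores)
```

The pairwise AUROC loop is quadratic in the number of samples, which is acceptable for a sketch; a rank-based implementation would be preferable for large test sets.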

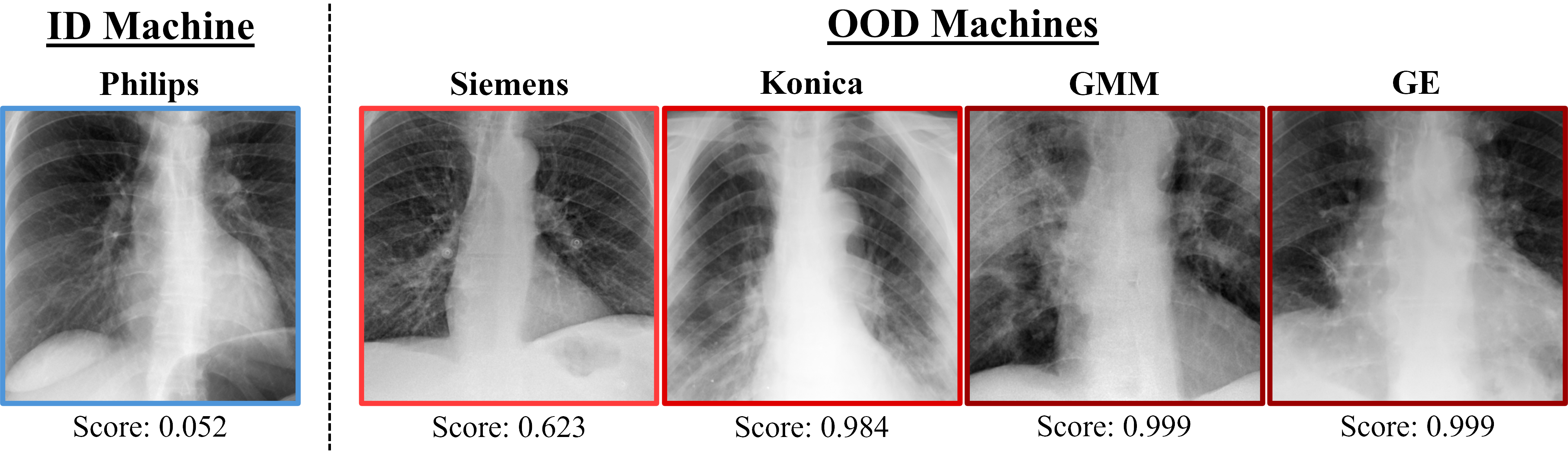

The results demonstrate that AdverX-Ray performs effectively across all ID sets, in contrast to both the VAE and GLOW models. GLOW exhibits poor performance due to a known issue where it assigns higher likelihoods to OOD images. Interestingly, this limitation is not consistent, as it only occurs with certain OOD machine models. AdverX-Ray consistently outperforms both VAE and GLOW across all test sets, except for the GE machine, where VAE achieves slightly better results.

| Methods | Siemens | Konica | Philips | GE | GMM | Average AUROC↑/FPR95↓ |
|---|---|---|---|---|---|---|
| VAE (ELBO) | 49.7 | 30.6 | 38.8 | 98.6 | 62.3 | 56.0 / 81.5 |
| GLOW (LL) | 31.4 | 34.2 | 18.9 | 88.1 | 90.6 | 52.7 / 95.2 |
| AdverX-Ray (Proposed) | 96.3 | 96.3 | 96.1 | 92.4 | 99.7 | 96.2 / 19.7 |

Additionally, while Table 1 shows that the VAE and GLOW models effectively detect high-frequency covariate shifts within the Philips X-ray dataset, their narrow focus on these components proves insufficient for distinguishing scans from different manufacturers. In contrast, the AdverX-Ray model, with its broader frequency coverage, successfully addresses this limitation. AdverX-Ray achieves these results with a model size of just 15 MB, significantly smaller than the VAE (126 MB) and GLOW (120 MB). The results are averaged over five runs, using 64 random patches per image.

Examples of OOD scores generated by the AdverX-Ray model trained on the Philips machine scans.

@inproceedings{caetano2025adverx,
  title={AdverX-Ray: ensuring x-ray integrity through frequency-sensitive adversarial VAEs},
  author={Caetano, Francisco and Viviers, Christiaan and Filatova, Lena and van der Sommen, Fons and others},
  booktitle={Medical Imaging 2025: Image Processing},
  volume={13406},
  pages={109--116},
  year={2025},
  organization={SPIE}
}